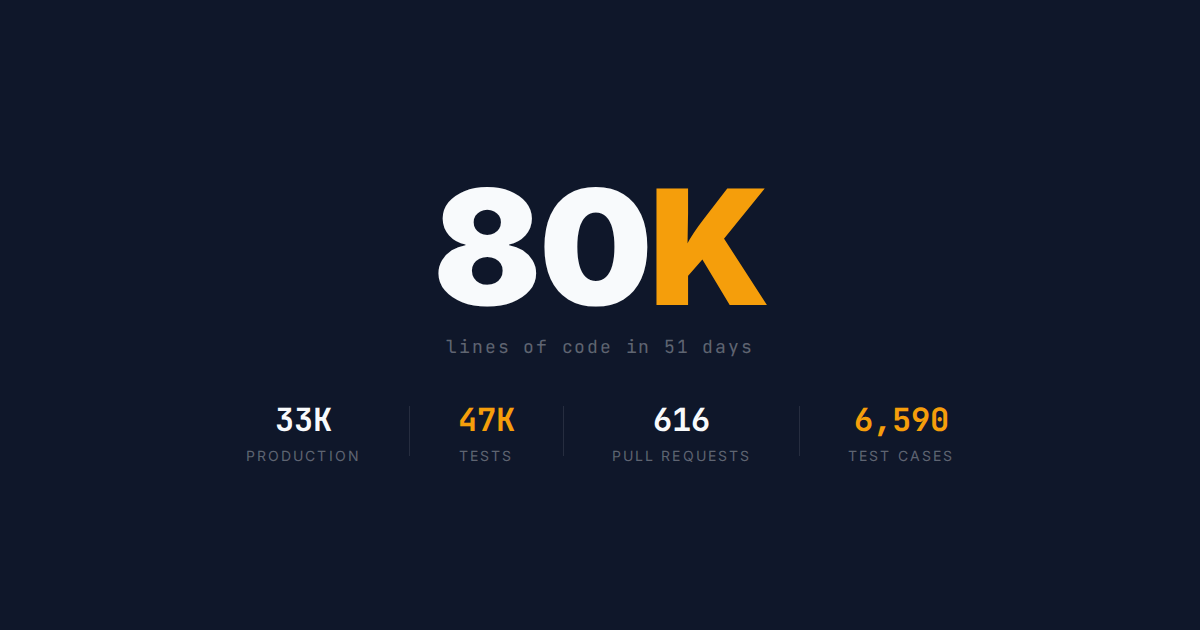

80,000 Lines of Code in 51 Days

Four open-source workflow commands, the counterintuitive lesson behind them, and a question: what does your process look like?

Early on, I tried running multiple Claude agents in parallel. Hand them separate issues, let them all implement simultaneously, merge everything at the end. On paper, this should have been the optimal approach. AI writes code fast. More agents means more throughput. Simple math.

It made things worse.

Manasight is a desktop companion for MTG Arena: Tauri, Rust, TypeScript, Astro. One developer. The last post covered testing. This one covers building: how GitHub issues become merged pull requests without me writing the code.

The project now has roughly 80,000 lines of code across six repositories. 33,000 lines of production code, 47,000 lines of tests. 616 merged pull requests. Around 6,590 test cases. 51 days.

Why parallel agents made things worse

First, I couldn't keep track of what each agent was doing. Each agent needed small corrections, and those corrections compounded when agents were working from stale context. By the time one agent finished, others had already changed the code it was building on.

Second, merging was painful. Each agent had worked from a different snapshot of the codebase, so figuring out how the changes interacted was harder than reviewing them one at a time.

Claude writes code so fast that running agents in parallel barely improves total wall-clock time. The long pole isn't implementation. It's human review and QA. Sequential processing is slower on paper but faster in practice because the human can actually think.
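A back-of-the-envelope model makes the point concrete. This is a minimal sketch, not a measurement: the function name, the single-reviewer assumption, and every number below (minutes per issue, the reconcile cost) are hypothetical and chosen only to illustrate the shape of the argument.

```python
import math


def wall_clock_minutes(issues, implement_min, review_min, agents=1, reconcile_min=0.0):
    """Elapsed minutes when one human reviews every change.

    Implementation is spread across `agents`. Review is not, because there
    is only one reviewer. `reconcile_min` is a hypothetical per-issue cost
    paid only when several agents built on different snapshots.
    """
    implementation = math.ceil(issues / agents) * implement_min
    review = issues * review_min
    reconcile = issues * reconcile_min if agents > 1 else 0.0
    return implementation + review + reconcile


# Illustrative numbers only: 5 issues, 10 min to implement, 45 min to review.
sequential = wall_clock_minutes(5, implement_min=10, review_min=45)
parallel_on_paper = wall_clock_minutes(5, implement_min=10, review_min=45, agents=5)
parallel_in_practice = wall_clock_minutes(
    5, implement_min=10, review_min=45, agents=5, reconcile_min=15
)
```

Under these made-up inputs, five agents shave only the implementation term, and a modest reconcile cost per issue is enough to erase the gain. Review dominates either way.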

The lesson: optimize for the human's reasoning capacity, not the AI's coding speed.

Four Claude Code commands, published as open source

I built four workflow commands around that lesson. They're the system behind those 616 merged PRs, and I've open-sourced them at manasight-claude-commands.

I don't know if these patterns generalize beyond one project. This is still evolving, and the best way to pressure-test it is to put it in front of other developers.

The rest of this post walks through the system. The thinking behind the commands is the part I hope is useful regardless of whether you ever use the commands themselves.

Here's how the four commands fit together:

    /issue-to-pr          The atomic unit. One issue in, one reviewed PR out.
      |
      +-- /self-review    The review gate. 5 parallel agents score findings
      |                   by confidence, filtering false positives.
      |
    /sequential-issues    Chains /issue-to-pr across multiple issues.
      |                   Each PR stacks on the previous branch.
      |
      +-- /merge-stack    Collapses the stack. Retargets all PRs to main,
                          rebases unique commits, squash-merges in order.

Specs before code

The commands don't work without a prerequisite: the work has to be well-specified before Claude touches it.

Every PR starts with a fully specified GitHub issue. Depending on the size of the change, the issue might be backed by a feature spec, an architecture decision record, and/or a research doc — but the specificity lives in the issue itself.

Before implementation, I prompt Claude to review the issue and tell me if it's ready to implement. This is the Implementer/Reviewer pattern applied outside of code: Claude flags ambiguity, missing acceptance criteria, or unstated assumptions before any code gets written. Under-specified work produces code I have to throw away.

During planning, I ask Claude to break work into the fewest issues possible while keeping each under 200 lines of code (the median PR lands at 243). Claude produces noticeably better work at this scope. Above it, I find myself reworking or throwing away PRs. Below it, I haven't. Small, stacked changes are also easier for the human to reason about — reviewing is still my job, and small diffs make that job possible.

Test code outweighs production code by roughly 1.4 to 1 (47,000 lines against 33,000). My last post covered the QA philosophy behind that number.

/issue-to-pr

The atomic unit. One GitHub issue URL in, one reviewed PR out (source).

Five phases:

- Research. A sub-agent explores the codebase to understand the relevant code paths. This runs in isolation so the search results don't consume the parent's context window.

- Implementation. Write the code, write the tests, run the pre-commit checks defined in the project's CLAUDE.md.

- PR creation. Branch, commit, push, open the pull request.

- Self-review. A fresh-context agent examines the PR. This is the Implementer/Reviewer pattern built directly into the workflow. The review agent has no memory of the implementation decisions. It reads the diff cold, the same way a teammate would on a real pull request.

- Iteration. If the review finds issues, the implementation agent fixes them and the reviewer runs again. Up to 10 rounds. Then it's ready for human review.

Most PRs take one to three review iterations. The common findings: missing edge case tests, inconsistent error handling, a function that could be simplified. The things a human reviewer catches too, except this runs automatically on every PR regardless of how tired I am or how late it is.
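The self-review and iteration phases amount to a bounded loop. The sketch below shows that control flow only, under stated assumptions: `review_loop`, `LoopResult`, and the `review` and `fix` callables are hypothetical stand-ins for the agents, not code from the published commands. The only detail taken from the workflow is the cap of 10 rounds.

```python
from dataclasses import dataclass, field
from typing import Callable, List

MAX_REVIEW_ROUNDS = 10  # the cap described above


@dataclass
class LoopResult:
    rounds: int                 # how many reviews were run
    clean: bool                 # True if the last review found nothing
    remaining: List[str] = field(default_factory=list)


def review_loop(
    review: Callable[[], List[str]],
    fix: Callable[[List[str]], None],
    max_rounds: int = MAX_REVIEW_ROUNDS,
) -> LoopResult:
    """Alternate fresh-context review and fixes until clean or out of rounds."""
    findings: List[str] = []
    for round_number in range(1, max_rounds + 1):
        findings = review()          # hypothetical: a reviewer with no prior context
        if not findings:
            return LoopResult(round_number, clean=True)
        fix(findings)                # hypothetical: the implementer addresses them
    # Fixes for the final findings were attempted but never re-reviewed.
    return LoopResult(max_rounds, clean=False, remaining=findings)


# Stubbed agents: two rounds with findings, then a clean pass.
pending = [["missing edge case test"], ["inconsistent error handling"], []]
fixed: List[str] = []
result = review_loop(review=lambda: pending.pop(0), fix=fixed.extend)
```

With these stubs the loop finishes clean on the third review. A run that never comes back clean stops at the cap and hands the last findings to the human.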

/sequential-issues

My preferred workflow for planned work (source). It chains /issue-to-pr across a list of issues using stacked branches: each PR targets the previous one's branch, so each diff shows only its own changes.
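The stacking rule is simple enough to state in a few lines. This is a sketch under assumptions: `stacked_bases` and the branch names are hypothetical, and the command itself may choose bases differently.

```python
from typing import List, Tuple


def stacked_bases(branches: List[str], trunk: str = "main") -> List[Tuple[str, str]]:
    """Pair each branch with the base branch its PR should target.

    The first PR targets the trunk. Every later PR targets the branch
    before it, so its diff contains only its own changes.
    """
    bases = [trunk] + branches[:-1]
    return list(zip(branches, bases))


# Hypothetical branch names, in the order the issues are processed.
stack = stacked_bases(["issue-101", "issue-102", "issue-103"])
```

Here `issue-102` is reviewed against `issue-101` rather than `main`, which is what keeps each diff small.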

Two gates per issue, both mandatory:

- Code review passes (iterative, using /self-review)

- CI green (tests, linting, formatting all pass)

The human's work is front-loaded and back-loaded. Front-loaded: planning, writing specs, ordering the issues. Back-loaded: reviewing the PR stack, running QA. The middle is autonomous.

This is where the Implementer/Reviewer pattern scales. Every issue in the sequence gets independent fresh-context review. The fifth PR in a stack gets the same review rigor as the first, because each review agent starts from scratch. No fatigue, no "I already looked at this codebase today" shortcuts. That's something I can't match as a human reviewer at 11 PM on a Tuesday.

/self-review and /merge-stack

(self-review source | merge-stack source)

/self-review started as a fork of Anthropic's /code-review plugin. Five parallel agents examine the PR independently, and each finding is scored by confidence. Only findings above a threshold surface in the feedback. Without that filtering, false positives pile up and you stop reading the comments.

Two things I changed. First, I lowered the confidence threshold from 80 to 75. Second, Anthropic's version won't re-review a PR it's already reviewed. Mine will. In the autonomous loop, /self-review runs after each round of fixes, and it occasionally catches something on the second or third pass that it missed the first time. Because no human is watching the middle of the loop, I'd rather surface more findings and re-review than skip checks and let bugs into the product.
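The filtering step can be sketched as follows. The `Finding` type, the agent names, the sample findings, and the choice to treat the threshold as inclusive are all assumptions made for illustration. Only the 75 and 80 values come from the description above.

```python
from dataclasses import dataclass
from typing import Iterable, List

CONFIDENCE_THRESHOLD = 75  # lowered from 80, as described above


@dataclass(frozen=True)
class Finding:
    agent: str        # hypothetical label for the agent that raised it
    message: str
    confidence: int   # 0-100 score


def surfaced(findings: Iterable[Finding], threshold: int = CONFIDENCE_THRESHOLD) -> List[Finding]:
    """Keep findings at or above the threshold, most confident first.

    Whether the real cutoff is inclusive is an assumption of this sketch.
    """
    kept = [f for f in findings if f.confidence >= threshold]
    return sorted(kept, key=lambda f: f.confidence, reverse=True)


# Made-up findings from three of the parallel agents.
findings = [
    Finding("agent-3", "inconsistent error handling", 78),
    Finding("agent-1", "missing edge case test", 90),
    Finding("agent-4", "variable could be renamed", 40),
]
at_75 = surfaced(findings)
at_80 = surfaced(findings, threshold=80)
```

In this example the finding scored 78 is hidden at a threshold of 80 and shown at 75, which is the trade the lower threshold makes: a few more comments to read in exchange for fewer missed problems.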

/merge-stack collapses a stack of reviewed PRs into main. It retargets each PR, rebases the unique commits, and squash-merges in order. This is bookkeeping that's tedious to do by hand but straightforward to automate.
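One way to picture that bookkeeping is as a list of git and GitHub CLI commands. The sketch below only builds the command strings and does not run them. It is an assumption about how such a plan could look, not the contents of the published command: `StackedPR`, `merge_stack_plan`, the PR numbers, branch names, and SHAs are all hypothetical. Recording each branch's tip before any rebasing matters, because `git rebase --onto` needs the old parent tip to replay only the commits unique to the next branch.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class StackedPR:
    number: int        # hypothetical PR number
    branch: str        # hypothetical branch name
    original_tip: str  # branch tip recorded before any rebasing


def merge_stack_plan(stack: List[StackedPR], trunk: str = "main") -> List[str]:
    """Build the commands to collapse a stack, bottom PR first."""
    # Step 1: point every PR at the trunk.
    commands = [f"gh pr edit {pr.number} --base {trunk}" for pr in stack]
    # Step 2: for each PR in order, replay its unique commits, then squash-merge.
    for index, pr in enumerate(stack):
        if index > 0:
            previous = stack[index - 1]
            commands += [
                "git fetch origin",
                # Replay only the commits after the previous branch's old tip.
                f"git rebase --onto origin/{trunk} {previous.original_tip} {pr.branch}",
                f"git push --force-with-lease origin {pr.branch}",
            ]
        commands.append(f"gh pr merge {pr.number} --squash")
    return commands


plan = merge_stack_plan([
    StackedPR(11, "issue-101", "aaa1111"),
    StackedPR(12, "issue-102", "bbb2222"),
])
```

For a two-PR stack this yields two retargets, a plain squash-merge of the first PR, then fetch, rebase, force-push, and squash-merge for the second.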

Both are documented and open-sourced alongside the other two commands.

Your turn

A few weeks ago, frankfaustino found the Manasight parser repo, filed issues, and merged bug fixes. The first outside contributor. Thank you, keep them coming!

All four commands are at manasight/manasight-claude-commands with <!-- CUSTOMIZE --> markers showing what to adapt. If you've built something different, I'd love to hear about it. The companion repo has Discussions enabled for exactly this.

Optimize for the human. The AI will keep up.

I'm Tim. Subscribe to get new posts by email, or follow @manasightgg on Twitter and @manasight.gg on Bluesky.

Manasight is not affiliated with, endorsed by, or sponsored by Wizards of the Coast or Hasbro.